This is definitely going to change with time, but here is the current vague, but slowly solidifying, plan for MacFeegle Prime, my competitor for PiWars 2020!

Concept

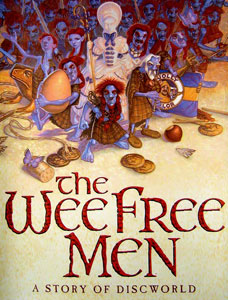

This robot is heavily inspired by Johnny Five from Short Circuit. To that end, it’ll be tracked and have a pair of arms, a head, and a shoulder-mounted Nerf cannon. There will have to be lasers and blinkenlights in there somehow too! The demeanour and style of the robot will be very heavily influenced by the Wee Free Men from Terry Pratchett’s Discworld series… No, I’m not sure what that’s going to look like either, but I’m looking forward to finding out!

Original Designer, Paul Kidby

Hardware

Unsurprisingly, this will primarily run on a Raspberry Pi. It will take data from the controller, sensors, and cameras, then send appropriate control signals to the motors, servos, and lights.

As he has a head, I was planning on using a pair of cameras. The “simple” option is just to stream these over Wi-Fi to a phone and use a Google Cardboard headset to get a 3D live stream from the robot’s perspective. Longer term I’ll be using OpenCV or similar to generate a depth map to allow for autonomous navigation and object detection. I was thinking of using two Pi Zeros with cameras attached; they could be dedicated to rescaling and streaming, sending a higher quality stream to an HMD (head-mounted display) and a lower quality stream to another Raspberry Pi that could run OpenCV on it. In the end, I went with the StereoPi as it is designed for this very task! To that end, I’ve the Deluxe Starter Kit on order, which includes a Raspberry Pi 3 Compute Module and a pair of cameras with wide angle lenses.
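To give a feel for what the depth map involves: once a stereo matcher (OpenCV has a couple) has worked out how far a feature shifts between the left and right images, the distance falls out of one line of arithmetic. This is only a sketch of that last step. The focal length and camera spacing below are made-up placeholder numbers, not measurements from my cameras, and `depth_from_disparity` is just a name I picked for illustration.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Distance in metres to a point, given its pixel shift between the two views.

    Uses the standard pinhole stereo relation: depth = focal_length * baseline / disparity.
    """
    if disparity_px <= 0:
        # No measurable shift means the point is effectively at infinity
        return float("inf")
    return focal_length_px * baseline_m / disparity_px


# Placeholder values (assumptions): focal length in pixels and lens spacing in metres
FOCAL_LENGTH_PX = 700.0
BASELINE_M = 0.065

if __name__ == "__main__":
    for disparity in (70, 35, 7):
        distance = depth_from_disparity(disparity, FOCAL_LENGTH_PX, BASELINE_M)
        print(f"disparity {disparity:>3} px -> roughly {distance:.2f} m")
```

The useful takeaway is that small disparities mean far-away objects, so depth resolution gets worse with distance, and a wider camera spacing helps at range.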

Motors are controlled via an Arduino with a motor shield, in this case an Adafruit Feather and motor wing, connected to a pair of PiBorg MonsterBorg motors, and these are beasts! I started off with motors from an RC car, and they rapidly hit the limit of what they could move. The robot already weighs 1.6 kg…
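As it’s a tracked robot, steering comes down to running the two tracks at different speeds. Here is a minimal sketch of how the Pi might turn a joystick’s throttle and turn values into left and right track speeds before handing them to the motor controller. The function names and the 8-bit (-255 to 255) output range are assumptions for illustration, not what’s actually running on the robot.

```python
def mix_tank_drive(throttle, turn):
    """Mix throttle and turn (each -1.0 to 1.0) into (left, right) track speeds."""
    left = throttle + turn
    right = throttle - turn
    # If either track would exceed full power, scale both down together
    # so the ratio between them (and therefore the turn) is preserved.
    biggest = max(abs(left), abs(right), 1.0)
    return left / biggest, right / biggest


def to_pwm(speed, max_pwm=255):
    """Convert a -1.0 to 1.0 speed into a signed PWM value (assumed 8-bit range)."""
    return int(round(speed * max_pwm))


if __name__ == "__main__":
    for throttle, turn in ((1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (0.5, -0.25)):
        left, right = mix_tank_drive(throttle, turn)
        print(f"throttle={throttle:+.2f} turn={turn:+.2f} "
              f"-> left={to_pwm(left):+4d} right={to_pwm(right):+4d}")
```

Full throttle plus full turn ends up with one track at full power and the other stopped, which pivots the robot around the stationary track.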

For the arms I’ll be using servos, a whole bunch of them! I’m aiming for 7-DOF (seven degrees of freedom) arms, the same as a human arm, with the shoulder servos being more powerful than the others as they’ve more weight to move around. The head and Nerf cannon will also have a pair of servos for moving them around. The torso will need to be actuated too, but I’m probably going to use a motor for that as it’ll lift the whole weight of the robot from the waist up. To control all of these I’ve an Adafruit 16 servo hat; I may need another…
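Under the hood, positioning a servo is just a matter of sending it a pulse of the right width, and the servo hat’s PWM chip counts that pulse in 12-bit steps. The servo library would normally do this for you, but here is a rough sketch of the arithmetic. The 1000 to 2000 µs pulse range and 180 degree travel are assumptions; real servos vary and need calibrating individually.

```python
PWM_FREQUENCY_HZ = 50     # typical refresh rate for hobby servos
RESOLUTION_STEPS = 4096   # 12-bit PWM counter
MIN_PULSE_US = 1000       # assumed pulse width at 0 degrees
MAX_PULSE_US = 2000       # assumed pulse width at full travel
MAX_ANGLE_DEG = 180       # assumed servo travel


def angle_to_pulse_us(angle_deg):
    """Map an angle to a pulse width in microseconds, clamped to the servo's range."""
    angle = max(0.0, min(float(MAX_ANGLE_DEG), angle_deg))
    return MIN_PULSE_US + (MAX_PULSE_US - MIN_PULSE_US) * angle / MAX_ANGLE_DEG


def pulse_us_to_ticks(pulse_us):
    """Convert a pulse width into the number of 12-bit steps the output stays high."""
    period_us = 1_000_000 / PWM_FREQUENCY_HZ  # 20,000 us per cycle at 50 Hz
    return round(pulse_us / period_us * RESOLUTION_STEPS)


if __name__ == "__main__":
    for angle in (0, 90, 180):
        pulse = angle_to_pulse_us(angle)
        print(f"{angle:>3} deg -> {pulse:.0f} us -> {pulse_us_to_ticks(pulse)} ticks")
```

With these numbers the whole 180 degrees of travel is spread over about 205 steps, so each step is a little under one degree.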

For sensors I’ve a load of ultrasonic sensors, inertial measurement units (IMUs), and optical sensors. The ultrasonic sensors will be mounted around the robot to get a decent set of returns to create a map from, the IMUs will be good for checking whether the robot is level and where it’s moving, and the optical sensors should be handy for line following. These will almost certainly be fused together via an Arduino. This means that the real time bits can be done on dedicated hardware, and we don’t have to worry about timing issues on the Pi, which isn’t a real time system.
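To make the sensor side a bit more concrete, here is a sketch of two of the calculations involved: turning an ultrasonic echo time into a distance, and blending gyro and accelerometer readings into a steadier tilt estimate with a simple complementary filter. On the real robot this would most likely live on the Arduino rather than in Python, and the axis conventions and the 0.98 blend factor are assumptions I’ve picked for illustration.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # in dry air at about 20 degrees C


def echo_to_distance_m(echo_time_s):
    """The ping travels out and back, so halve the round trip."""
    return echo_time_s * SPEED_OF_SOUND_M_S / 2


def accel_pitch_deg(accel_x, accel_z):
    """Tilt angle from gravity alone (noisy, but doesn't drift). Axes are assumed."""
    return math.degrees(math.atan2(accel_x, accel_z))


def complementary_filter(prev_deg, gyro_rate_dps, accel_deg, dt_s, alpha=0.98):
    """Trust the gyro over short timescales and the accelerometer over long ones."""
    gyro_estimate = prev_deg + gyro_rate_dps * dt_s
    return alpha * gyro_estimate + (1 - alpha) * accel_deg


if __name__ == "__main__":
    print(f"10 ms echo -> {echo_to_distance_m(0.010):.3f} m")

    # Robot sat still but tilted: the gyro reads zero, the accelerometer sees the tilt
    pitch = 0.0
    target = accel_pitch_deg(0.1736, 0.9848)  # close to a 10 degree tilt
    for _ in range(200):
        pitch = complementary_filter(pitch, 0.0, target, dt_s=0.01)
    print(f"estimated pitch after 2 s: {pitch:.1f} deg (accelerometer says {target:.1f})")
```

The filter creeps towards the accelerometer’s answer rather than jumping to it, which is what smooths out vibration from the tracks.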

Software

I’m expecting to use Python for the lion’s share of the code for this. The Pi, StereoPi, and the servo hat I’m using have excellent support for it, and I’ve a bit of experience with it already. The Arduinos are programmed in C/C++, and if I need to write something for the phone to enable a head-up display I’m going to use the Unity games engine, which uses C#. It’s what I use at work and I know both Unity and C# very well.

Construction

I’m using an aluminium plate for the base of the chassis and 3D printing custom components to mount to it, currently all hot glued in place in the name of rapid prototyping/laziness…

Timescale

I’m close to having the drive part of this done, at least the first iteration, and I’m expecting that to be sorted over the next few weeks. There will no doubt be improvements over time, so it won’t be a one-shot thing. For the torso, arms, and head I’m aiming to get the first iteration sorted by the end of November. This will give me plenty of time to improve things and get the software written for next March too.

That’s it for now, it’s mostly a brain dump while I work on things in more detail.